Docker

What is Docker?

Docker is a tool that helps developers run applications in isolated environments called containers. These containers package everything an app needs to run — code, libraries, and settings. As a result, apps work the same on any system.

Why Use Docker?

- Docker containers ensure consistent application behavior across different operating systems.

- They use minimal system resources by running as lightweight, isolated units.

- Setting up environments becomes fast, repeatable, and easy to manage.

In short, Docker enables teams to build, share, and deploy applications more efficiently and with greater reliability.

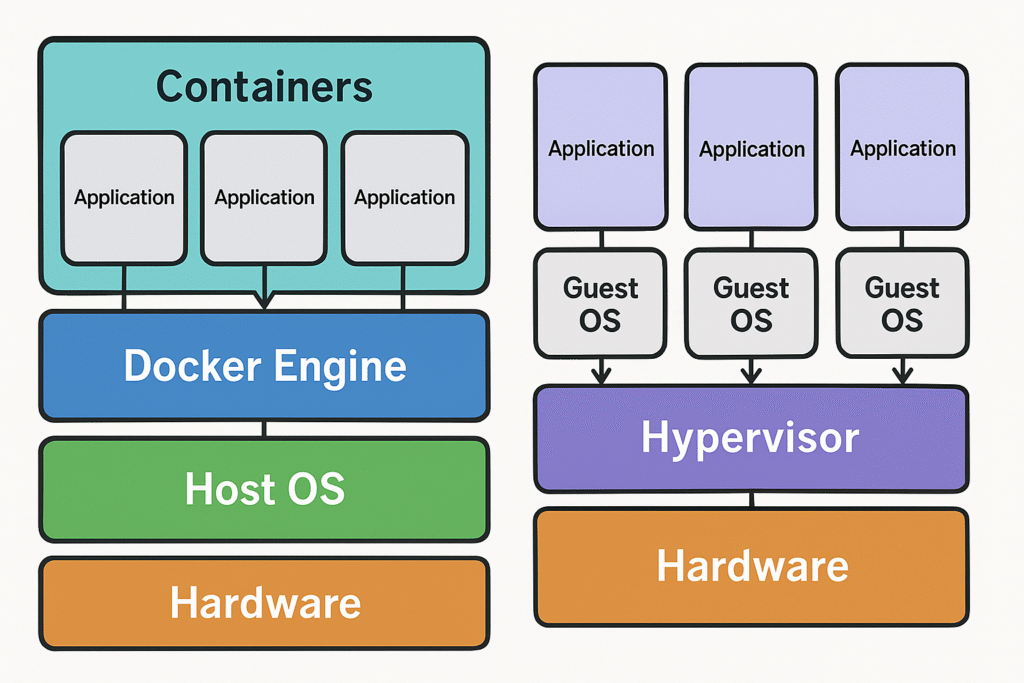

Docker vs Virtual Machines

When comparing Docker containers and Virtual Machines (VMs), the fundamental difference lies in how they operate and manage resources. Docker containers package applications along with their dependencies, but instead of running a full operating system, they share the host operating system’s kernel. As a result, Linux containers need a Linux kernel and Windows containers need a Windows kernel. On macOS and Windows, Docker Desktop typically supplies the Linux kernel by running a small Linux virtual machine behind the scenes. Because of this architecture, containers are lightweight, quick to start, and highly portable, which makes them ideal for microservices, continuous integration, and rapid deployment scenarios.

In contrast, Virtual Machines emulate complete hardware environments, each running its own guest operating system on top of a hypervisor. This approach offers stronger isolation and the flexibility to run different operating systems side by side. However, this comes at the cost of increased resource consumption and slower startup times compared to containers.
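One way to see the shared kernel for yourself is to compare the kernel release reported by the host with the one reported inside a container. The sketch below assumes the small alpine image and uses a made-up run helper that only prints Docker commands unless you set DRY_RUN=0, so it is safe to try on a machine without Docker.

```shell
# Hypothetical helper: prints commands by default; set DRY_RUN=0 to execute them.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Kernel release as seen by the host.
uname -r

# Kernel release as seen from inside a throwaway Alpine container.
# On a Linux host this should match the line above, because the container
# shares the host kernel instead of booting its own.
run docker run --rm alpine uname -r
```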

Key Concepts

Before diving into how Docker works, it’s important to understand a few key terms that you’ll encounter frequently.

Containers

A container is a lightweight, standalone environment that runs a specific piece of software. In fact, it includes everything needed to make the application work—its code, runtime, system tools, libraries, as well as configuration settings. Because of this, you can run the same container anywhere without worrying about missing dependencies.

Unlike virtual machines, however, containers share the host operating system’s kernel, which means they start up quickly and use fewer system resources. At the same time, each container remains isolated from the others, so one application cannot interfere with another. As a result, containerization provides both speed and reliability, making it perfect for modern software deployment.

Image

An image is a snapshot of everything needed to run a container. It includes:

- App code

- System dependencies

- Configuration files

- Startup instructions

Think of it like a recipe. When Docker runs an image, it creates a live container from that image.

Images are read-only. Each container adds its own thin writable layer on top, which means you can create many containers from the same image without conflicts.

Dockerfile

A Dockerfile is a plain text file with a list of instructions to build a Docker image. It defines:

- The base operating system

- Software packages to install

- Files to copy into the image

- Environment variables

- The command to run on startup

When you run docker build, Docker reads the Dockerfile and builds an image based on your instructions.
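To make the list above concrete, here is a minimal sketch that writes a Dockerfile with one instruction for each item. The nginx base image, the curl package, the html folder, and the SITE_NAME variable are placeholders assumed for illustration, not requirements.

```shell
# Write the example into a scratch directory so nothing existing is overwritten.
demo_dir=$(mktemp -d)

cat > "$demo_dir/Dockerfile" <<'EOF'
# Base image: an official image that already bundles an OS layer and nginx
FROM nginx

# Software packages to install (curl is only an example)
RUN apt-get update \
 && apt-get install -y --no-install-recommends curl \
 && rm -rf /var/lib/apt/lists/*

# Files to copy into the image
COPY ./html /usr/share/nginx/html

# Environment variable available to processes in the container
ENV SITE_NAME=demo

# Command to run on startup (this is also the nginx image's default)
CMD ["nginx", "-g", "daemon off;"]
EOF

echo "Dockerfile written to $demo_dir/Dockerfile"
```

Running docker build in a folder that holds this file next to an html folder would then turn these instructions into an image.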

Volume

A volume stores data outside a container’s internal filesystem.

You’ll often use volumes for:

- Databases

- Log files

- App-generated data

Why does this matter?

By default, deleting a container also deletes its data. Volumes solve this by providing persistent storage. They help you keep important data even after the container is removed.
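As a rough sketch of that persistence, the commands below write a file from one container and read it back from a second, brand-new one. The volume name demo-data, the alpine image, and the run helper (which only prints commands unless DRY_RUN=0 is set) are assumptions made for this example.

```shell
# Hypothetical helper: prints commands by default; set DRY_RUN=0 to execute them.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Create a named volume managed by Docker.
run docker volume create demo-data

# First container: write a file into the volume; --rm removes the container on exit.
run docker run --rm -v demo-data:/data alpine sh -c 'echo hello > /data/note.txt'

# Second container: the file is still there because it lives in the volume,
# not in the first container's own filesystem.
run docker run --rm -v demo-data:/data alpine cat /data/note.txt

# Remove the volume once the data is no longer needed.
run docker volume rm demo-data
```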

Docker Engine

Docker Engine is the core technology that builds, runs, and manages containers on your computer or server. In fact, you can think of it as the powerhouse that bundles your application, along with all its dependencies, into a consistent package so that it works the same everywhere. Once installed on Windows, macOS, or Linux, it not only allows you to quickly build images from Dockerfiles but also run containers with ease. As a result, you can maintain reliable, portable environments while avoiding the dreaded “it works on my machine” problem.

Port

A port works like a doorway that lets your container talk to the outside world—like your browser or another app.

For example, let’s say your web app runs inside the container on port 80 (the default HTTP port).

You want to access it on your local machine using port 8080.

To do this, you tell Docker:

>> docker run -p 8080:80 myapp

This command means:

“Map port 80 inside the container to port 8080 on my machine.”

Now, when you visit localhost:8080 in your browser, you’ll see your app running from inside the container.
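Because the order of the two numbers is easy to mix up, here is a small sketch in plain shell (no Docker required) that splits the mapping from the example into its two halves. The variable names are made up for illustration.

```shell
# The value passed to -p, in HOST:CONTAINER order.
mapping="8080:80"

host_port=${mapping%%:*}       # everything before the first colon
container_port=${mapping##*:}  # everything after the last colon

echo "Browser connects to: localhost:$host_port"
echo "App listens inside the container on: port $container_port"
```

The left-hand number is always the one you type into the browser, and the right-hand number is the one the app inside the container listens on.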

Docker Hub

Docker Hub is a cloud-based library and marketplace for container images, where millions of ready-to-use images are stored and shared. You can upload (“push”) your own images for global access or download (“pull”) existing ones to run instantly with Docker Engine. From databases like MySQL to complete app stacks like WordPress, Docker Hub lets you skip complex setups and jump straight into running software in seconds.

Get Started: Beginner’s Guide

To truly grasp Docker’s power, you need to get your hands dirty with real projects. This guide walks you through beginner-friendly Docker labs that help you install Docker Engine, run containers from Docker Hub, build your own images, and even share them globally. Each step is simple, practical, and crafted to boost your confidence as you explore containerization.

Install Docker Engine

Start by installing Docker Engine on your Windows, macOS, or Linux machine. The official guide at https://docs.docker.com/get-started/get-docker has platform-specific installation instructions. This setup enables you to build and run containers locally.

Pull and Run a Container from Docker Hub

Experience Docker Hub’s magic by pulling and running a ready-made image:

>> docker pull nginx

>> docker run -d -p 8080:80 nginx

Open your browser at http://localhost:8080 to see the Nginx web server running inside a container.

I suggest exploring other images at https://hub.docker.com

Build a Simple Docker Image Locally

Let’s create your own image with a static website:

- Create a folder named docker-site and, inside it, add a subfolder called html.

- Place an index.html file inside the html folder with any simple webpage content.

- Create a Dockerfile in the docker-site folder containing:

FROM nginx

COPY ./html /usr/share/nginx/html

- Build the image and run it from inside the docker-site folder. If the Nginx container from the previous step is still running, stop it first, because it already occupies port 8080:

>> docker build -t my-simple-site .

>> docker run -d -p 8080:80 my-simple-site

Visiting http://localhost:8080 now shows your custom static site.

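If you would rather script the file setup than create everything by hand, the steps above can be sketched as follows. The page content is a placeholder invented for this example, and the script writes into the current directory, so it is best run from an empty working folder.

```shell
# Create the folder layout: docker-site/ with an html/ subfolder.
mkdir -p docker-site/html

# A placeholder page; any simple HTML content works here.
cat > docker-site/html/index.html <<'EOF'
<!DOCTYPE html>
<html>
  <head><title>My Simple Site</title></head>
  <body><h1>Hello from a container</h1></body>
</html>
EOF

# The two-line Dockerfile from the steps above.
cat > docker-site/Dockerfile <<'EOF'
FROM nginx
COPY ./html /usr/share/nginx/html
EOF

# List the files that were created.
find docker-site -type f | sort
```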
Push Your Image to Docker Hub

Sharing your creation is easy if you have a Docker Hub account:

- Log in from your terminal:

>> docker login

- Tag your image with your username:

>> docker tag my-simple-site your-dockerhub-username/my-simple-site

- Push the image to Docker Hub:

>> docker push your-dockerhub-username/my-simple-site

Now your image is accessible globally for others (or for yourself on different machines).

Clean Up Your Docker Environment

Keep things tidy with these commands:

>> docker ps

>> docker stop <container_id>

>> docker rm <container_id>

>> docker rmi my-simple-site

Quick Docker Command Table

| Command | Description |
|---|---|
| docker ps | List running containers |
| docker ps -a | List all containers, including stopped ones |
| docker pull <ImageName:Version> | Download a Docker image (Version is optional and defaults to latest) |
| docker images | List local images |
| docker run <ImageName:Version> | Run a Docker image in a new container |
| docker run -d <ImageName:Version> | Run a container in detached (background) mode |
| docker run --name <ContainerName> <ImageName:Version> | Give the container a name |
| docker run -p <SystemPort>:<ContainerPort> <ImageName:Version> | Bind a system port to a container port (the container port is usually determined by the application in the image) |
| docker exec -it <Container ID> /bin/bash | Open a terminal inside a running container |
| docker rmi <ImageName> | Remove image |
| docker start <Container ID> | Start a stopped container |
| docker start -i <Container ID> | Start a container in interactive mode |
| docker stop <Container ID> | Stop container |
| docker rm <Container ID> | Remove container |
| docker logs <Container ID> | Show a container’s logs |
| docker network ls | List Docker networks |
| docker network create <Name> | Create a Docker network |
| docker run --network <NetworkName> <ImageName:Version> | Run a container inside a Docker network |
| docker run -v <SystemPath>:<ContainerPath> <ImageName:Version> | Mount a system path into the container for persistent data (bind mount) |
| docker run -v <Name>:<ContainerPath> <ImageName:Version> | Mount a named volume, stored in a location managed by Docker, at the container path |

Advanced Docker: Just a Glimpse

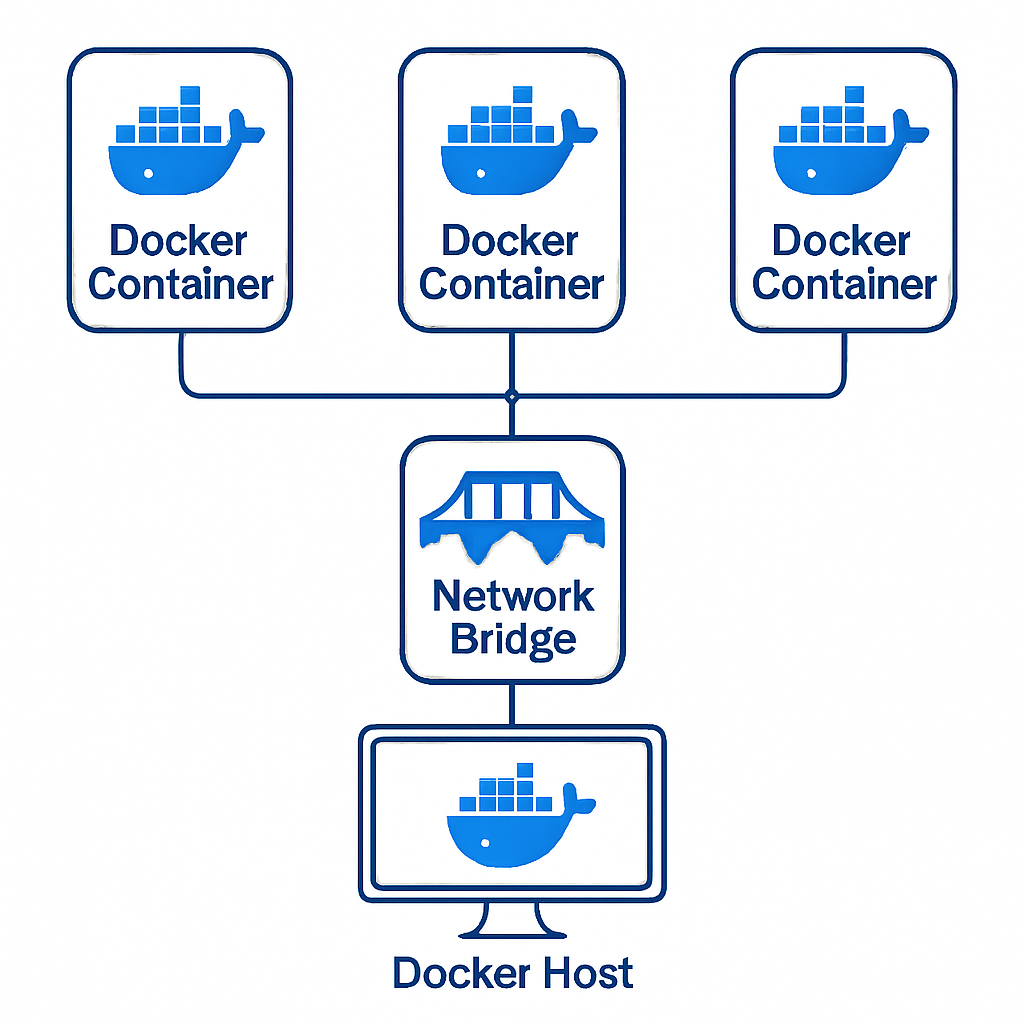

Docker Network

Docker Network empowers containers to communicate securely and efficiently within and across hosts. By default, Docker creates a bridge network where containers can interact, but advanced networking allows you to define custom networks tailored to your needs.

Why Docker Network matters:

Containers often need to work together, such as a web app talking to a database. Docker Network manages connections so they stay isolated from other apps and secure.

Types of Networks:

- Bridge: Default, isolated network on a single host.

- Host: Shares the host’s networking, offering high performance but less isolation.

- Overlay: Enables multi-host container communication, ideal for clustered apps.

- Macvlan: Assigns MAC addresses to containers, making them appear as physical devices on the network.

You create networks with commands like docker network create, attach containers with the --network flag, and inspect connectivity using docker network inspect.
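Put together, those three commands might look like the sketch below. The network name app-net, the container names web and db, and the run helper (which only prints commands unless DRY_RUN=0 is set) are assumptions chosen for this example.

```shell
# Hypothetical helper: prints commands by default; set DRY_RUN=0 to execute them.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Create a user-defined bridge network.
run docker network create app-net

# Attach two containers to it. On a user-defined network, containers can
# reach each other by container name (here: "db" and "web").
run docker run -d --name db --network app-net -e MYSQL_ROOT_PASSWORD=examplepassword mysql
run docker run -d --name web --network app-net -p 8080:80 nginx

# Show the network's settings and the containers attached to it.
run docker network inspect app-net
```

Only the web container publishes a port to the host here; the database stays reachable solely from containers on app-net, which is one simple way to keep it isolated.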

This network flexibility helps maintain container security, optimize communication, and simulate real-world environments by segmenting your container ecosystem.

Docker Compose

Docker Compose simplifies running complex applications with multiple containers by defining them in a single YAML file. Instead of managing each container individually, you declare how services interact, which images to use, and network or volume setups—all scripted and repeatable.

Why use Docker Compose:

When your app grows beyond one container (e.g., web server plus database plus cache), Compose orchestrates them together with one command.

Key components in docker-compose.yml:

- Services: Containers and their configurations (image, ports, environment variables).

- Networks: Define custom networking beyond default bridge.

- Volumes: Persist data outside container lifecycles.

Example Simple docker-compose.yml

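Save the file below as docker-compose.yml and start it with docker compose up -d (docker-compose up -d on older standalone installations). This starts both the web server and the database on a network that Compose creates automatically, where each service can reach the other by its service name.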

version: '3'
services:
  web:
    image: nginx
    ports:
      - "8080:80"
  db:
    image: mysql
    environment:
      MYSQL_ROOT_PASSWORD: examplepassword
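The example above only uses the services key. As a hedged sketch of how the other two components from the list (networks and volumes) might fit in, the script below writes an extended version of the file into a scratch directory. The names app-net and db-data are made up for illustration; /var/lib/mysql is where the official mysql image keeps its data.

```shell
# Write the example into a scratch directory so nothing existing is overwritten.
demo_dir=$(mktemp -d)

cat > "$demo_dir/docker-compose.yml" <<'EOF'
version: '3'
services:
  web:
    image: nginx
    ports:
      - "8080:80"
    networks:
      - app-net
  db:
    image: mysql
    environment:
      MYSQL_ROOT_PASSWORD: examplepassword
    volumes:
      - db-data:/var/lib/mysql   # keep database files across container removal
    networks:
      - app-net

# Named volume managed by Docker
volumes:
  db-data:

# Custom network shared by both services
networks:
  app-net:
EOF

echo "Compose file written to $demo_dir/docker-compose.yml"
```

Running docker compose up -d from that directory would start both services, and docker compose down would remove the containers while leaving the db-data volume in place.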